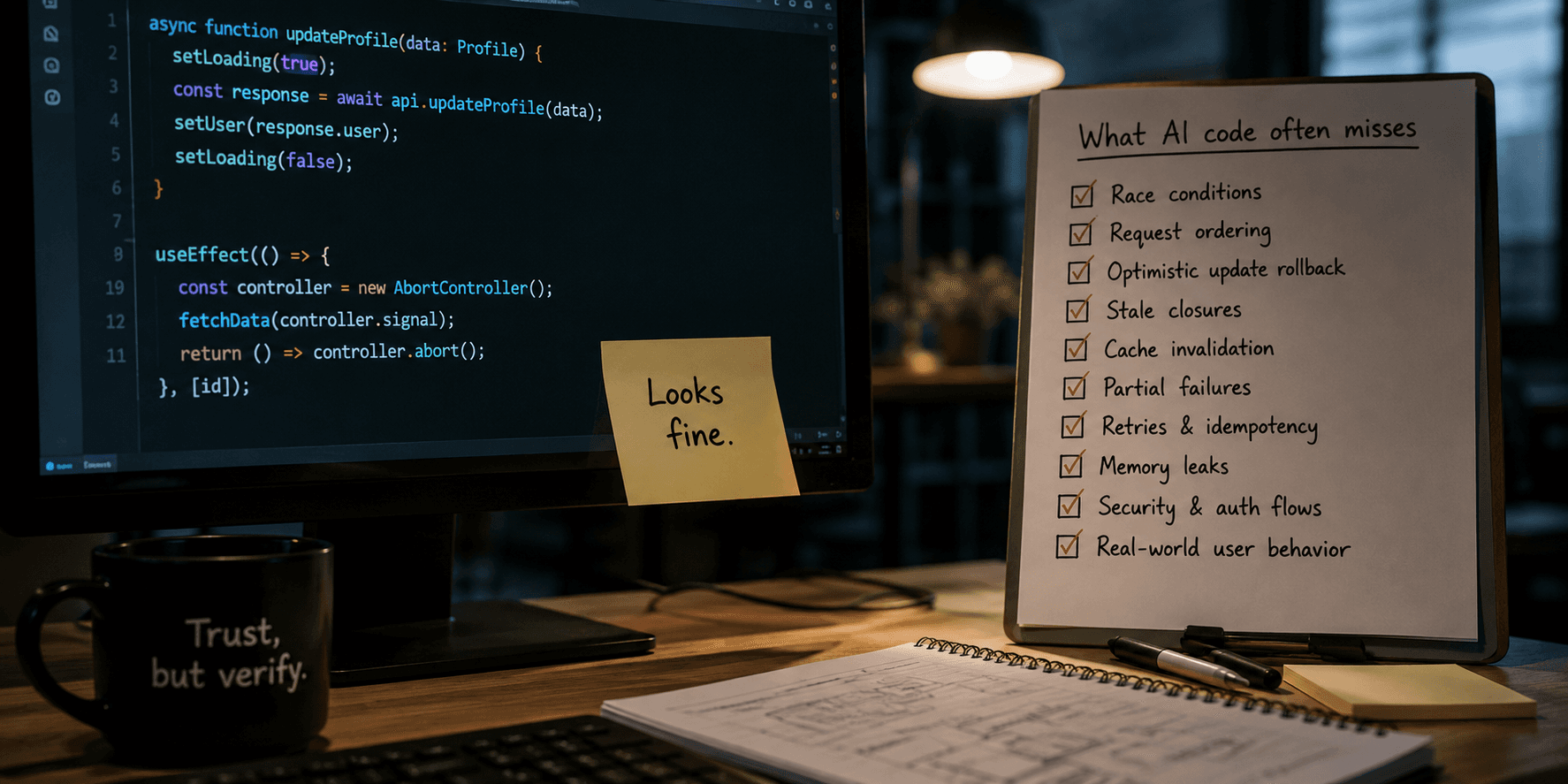

The biggest problem with AI-generated code isn’t bugs. It’s false confidence.

There’s a specific type of code that looks correct at a glance, passes review, ships to production, and then slowly starts breaking in ways nobody can explain immediately.

AI is extremely good at producing that type of code.

Not broken code. Convincing code.

That’s the real issue.

The code looks fine. That’s the problem.

AI-generated snippets usually have all the right signals:

clean TypeScript types

well-named functions

“correct” architectural separation

neat async flows

comments that explain intent

You read it and your brain just goes: “yeah, makes sense”.

That feeling is dangerous.

Because “makes sense” is not the same as “works under load, concurrency, retries, partial failures, and weird user behavior”.

Most production bugs don’t happen on the happy path anyway.

AI is excellent at the happy path.

Example 1: race condition disguised as clean async code

Classic case:

    async function updateProfile(data: Profile) {
      setLoading(true);
      const response = await api.updateProfile(data);
      setUser(response.user);
      setLoading(false);
    }

Looks fine.

Now add real-world behavior:

user clicks save twice

request A and B run in parallel

B finishes first

A finishes later and overwrites fresh state with stale data

No errors. No crashes. Just inconsistent state.

AI rarely accounts for request ordering unless you explicitly ask for it. It optimizes for readability, not concurrency safety.
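One common guard is "latest request wins": tag every call with a sequence number and drop any response that is no longer the newest. The sketch below is framework-free so it can run on its own. `latestOnly`, `fakeUpdateProfile`, and `currentUser` are hypothetical names for illustration, with the fake API standing in for `api.updateProfile` and the `apply` callback standing in for `setUser`.

```typescript
// Hypothetical helper: wraps an async call so that only the most recent
// invocation is allowed to apply its result.
function latestOnly<A extends unknown[], R>(
  run: (...args: A) => Promise<R>,
  apply: (result: R) => void,
): (...args: A) => Promise<void> {
  let latestCall = 0;
  return async (...args: A) => {
    const callId = ++latestCall; // tag this invocation
    const result = await run(...args);
    if (callId !== latestCall) return; // a newer call started, so drop the stale result
    apply(result);
  };
}

// Usage sketch: a fake API whose first request is slower than the second.
type Profile = { name: string };
const delays = [50, 10];
let requestIndex = 0;
const fakeUpdateProfile = (data: Profile): Promise<Profile> => {
  const delay = delays[requestIndex++] ?? 0;
  return new Promise((resolve) => setTimeout(() => resolve(data), delay));
};

let currentUser: Profile | null = null;
const saveProfile = latestOnly(fakeUpdateProfile, (user) => {
  currentUser = user;
});
```

Calling `saveProfile` twice in a row leaves the second call's data in place even though the first response arrives last. Disabling the save button while a request is in flight is a simpler alternative when it fits the UI.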

Example 2: optimistic updates that silently lie

AI loves optimistic UI:

    setTodos((prev) => [...prev, newTodo]);
    await api.createTodo(newTodo);

Looks modern. Feels responsive.

Now reality:

request fails

rollback is missing

UI keeps showing the item as saved even though the backend rejected it

user retries → duplicates

What you end up with is not a bug report.

It’s data corruption that nobody notices immediately.
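A minimal sketch of the missing rollback, assuming each todo carries a client-generated `id` and the API call rejects on failure. `addTodoOptimistically` and the in-memory `setTodos` are hypothetical stand-ins so the example runs outside React.

```typescript
type Todo = { id: string; title: string };

// Hypothetical in-memory stand-in for React state.
let todos: Todo[] = [];
const setTodos = (update: (prev: Todo[]) => Todo[]): void => {
  todos = update(todos);
};

async function addTodoOptimistically(
  newTodo: Todo,
  createTodo: (todo: Todo) => Promise<void>, // assumed API call that rejects on failure
): Promise<boolean> {
  setTodos((prev) => [...prev, newTodo]); // optimistic insert
  try {
    await createTodo(newTodo);
    return true;
  } catch {
    // Roll back only this item so other optimistic inserts survive.
    setTodos((prev) => prev.filter((t) => t.id !== newTodo.id));
    return false;
  }
}
```

The returned boolean gives the caller a place to show an error. If the backend also deduplicates on that `id`, a user retry no longer creates a second row, but that part is an assumption about the server.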

Example 3: stale closures in React that pass review

This one is everywhere.

    useEffect(() => {
      const interval = setInterval(() => {
        console.log(count);
      }, 1000);
      return () => clearInterval(interval);
    }, []);

AI often generates this kind of thing because it “looks correct React-wise”.

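But it locks in stale state. With an empty dependency array the effect runs once, so the interval callback keeps reading the count from the first render. You get frozen values, weird UI desyncs, and logic that behaves differently depending on render timing.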

The worst part: nothing crashes.

So nobody investigates.
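In React the usual fixes are to list `count` in the dependency array or to read it through a ref that is updated on every render. The mechanism is plain JavaScript closure behavior, so here is a framework-free sketch of it. `render`, `ref`, and `readLatest` are hypothetical names that model a render pass and `useRef`, not React APIs.

```typescript
// Model of the bug: the callback closes over one render's value.
function render(count: number): () => number {
  return () => count; // captured once, like an effect callback with [] deps
}

// Model of the ref-based fix: the callback reads the value at call time.
const ref = { current: 0 }; // plays the role of useRef
const readLatest = (): number => ref.current;

const staleCallback = render(0); // created during the "first render"
ref.current = 5; // state has since moved on to 5

// staleCallback() still returns 0, while readLatest() returns 5.
```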

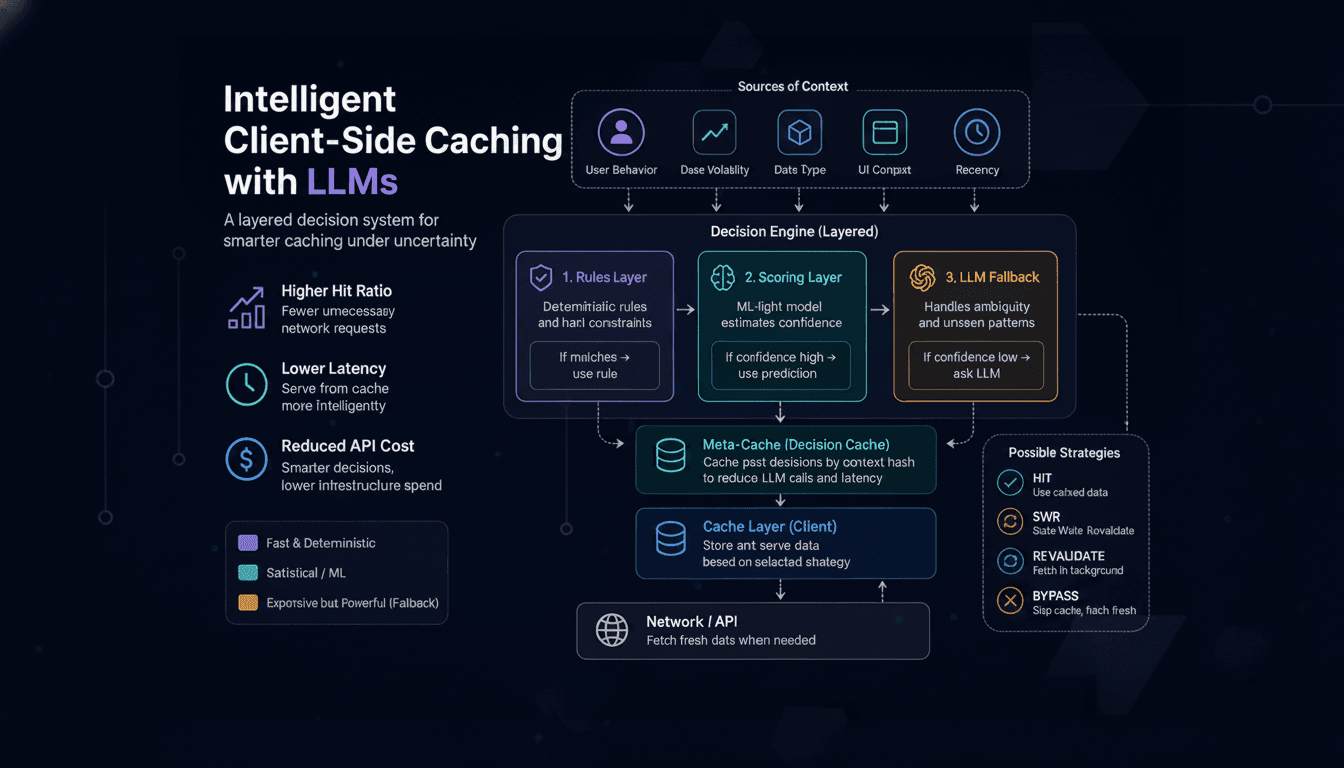

Example 4: caching logic that works until it doesn’t

AI-generated caching logic tends to assume:

keys are stable

invalidation is trivial

data shape never changes

Example:

    const cacheKey = `user-${userId}`;
    if (cache[cacheKey]) return cache[cacheKey];
    const data = await fetchUser(userId);
    cache[cacheKey] = data;
    return data;

Then reality hits:

user permissions change

backend updates partial fields

two services mutate the same entity

cache becomes subtly wrong, not obviously wrong

Now you have stale data that looks valid.

Those are the hardest bugs to trace.
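A cache that at least bounds how long it can be wrong needs two things the snippet above lacks: an expiry and an explicit invalidation hook. The sketch below is one way to get both. `TtlCache` is a hypothetical class, and the injectable `now` clock exists only to make expiry testable.

```typescript
class TtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private ttlMs: number;
  private now: () => number;

  constructor(ttlMs: number, now: () => number = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // expired: force a refetch
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }

  invalidate(key: string): void {
    this.entries.delete(key);
  }
}

// Usage sketch: invalidate on every write path that changes the user.
const userCache = new TtlCache<{ role: string }>(30000);
userCache.set("user-42", { role: "admin" });
userCache.invalidate("user-42"); // e.g. right after a permissions change
```

A TTL does not make the data correct, it only caps staleness. When two services write the same entity, invalidation has to be triggered by both of them, which this in-process sketch does not solve.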

Example 5: hallucinated APIs that look real

AI sometimes invents APIs that feel legitimate:

    await auth.refreshSession({ force: true });

Or:

    storage.invalidateAllQueries();

In plain JavaScript, or anywhere the object is typed as any, it compiles. It reads well. It passes code review if nobody checks the docs carefully.

Then runtime explodes.

This is worse than syntax errors because it survives until execution.
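How far an invented method gets depends on how the object is typed. A small sketch of the difference, using a hypothetical `AuthClient` interface that exposes only `getSession` (both names are made up for this example):

```typescript
// Hypothetical interface: the only method this auth client really has.
interface AuthClient {
  getSession(): string;
}

function typedCaller(auth: AuthClient): string {
  // Uncommenting the next line is a compile error, because
  // refreshSession does not exist on AuthClient:
  // auth.refreshSession({ force: true });
  return auth.getSession();
}

function untypedCaller(auth: any): string {
  // Compiles, because `any` turns type checking off for this value.
  // It fails only when this line actually runs.
  return auth.refreshSession({ force: true });
}
```

With accurate types the invented call never reaches review. With `any`, loose typings, or plain JavaScript, the first signal is a `TypeError` at runtime.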

Example 6: hidden memory leaks in “clean” abstractions

AI loves abstraction:

    useEffect(() => {
      const controller = new AbortController();
      fetchData(controller.signal);
      return () => controller.abort();
    }, [id]);

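The snippet itself is reasonable, as long as fetchData really forwards the signal to the underlying request and cleans up after itself. Now extend this pattern across 20 components, some nested, some conditional.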

Suddenly:

aborted requests still resolve

listeners accumulate

components remount without cleanup consistency

memory usage slowly climbs

Nothing obvious breaks. Everything just becomes heavier over time.
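Whether the cleanup does anything depends on the function that receives the signal. Here is a sketch of what "honoring the signal" looks like, with a hypothetical `abortableDelay` standing in for `fetchData`. It stops the pending work on abort and removes its own listener on success.

```typescript
// Hypothetical stand-in for fetchData: a delayed task that honors an AbortSignal.
function abortableDelay<T>(value: T, ms: number, signal: AbortSignal): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    if (signal.aborted) {
      reject(new Error("aborted"));
      return;
    }
    const onAbort = (): void => {
      clearTimeout(timer); // stop the pending work
      reject(new Error("aborted"));
    };
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort); // leave no listener behind
      resolve(value);
    }, ms);
    signal.addEventListener("abort", onAbort, { once: true });
  });
}
```

With the real `fetch`, passing `{ signal }` in the options makes the returned promise reject with an `AbortError` on abort. A wrapper that accepts a signal and never passes it on produces exactly the "aborted requests still resolve" symptom.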

The real issue: AI code looks “reviewed by default”

That’s the psychological shift.

Before AI:

you read code carefully because writing it took effort

After AI:

code already looks finished

types exist, structure exists, naming is clean

reviewer bias kicks in: “this is probably fine”

So instead of questioning logic, people verify surface correctness.

That’s a downgrade in engineering behavior.

Junior developers are the most exposed

A pattern that shows up repeatedly:

AI generates full modules

junior dev copies it

it works locally

nobody fully understands it

later changes are applied blindly on top

After a few cycles:

nobody owns the mental model anymore

debugging becomes guesswork

system behavior is “observed”, not understood

This is how codebases rot without obvious breakpoints.

Where this actually becomes dangerous in production systems

The risk is not isolated code snippets. It appears when AI-generated patterns are repeated across a system without a shared mental model of failure modes.

Auth and session management

Small mistakes here scale into security issues:

incorrect token refresh sequencing under concurrent requests

race conditions between logout and background API calls

silent session reuse after partial invalidation

The problem is not implementation complexity, but timing assumptions that are rarely tested.
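For the token refresh case, one widely used mitigation is "single flight": concurrent callers share one in-flight refresh instead of each starting their own. A minimal sketch, where `singleFlight` is a hypothetical helper and the refresh function passed to it is whatever your auth layer provides:

```typescript
// Hypothetical wrapper: concurrent callers share one in-flight task.
function singleFlight<T>(task: () => Promise<T>): () => Promise<T> {
  let inFlight: Promise<T> | null = null;
  return () => {
    if (inFlight === null) {
      inFlight = (async () => {
        try {
          return await task();
        } finally {
          inFlight = null; // allow a fresh refresh once this one settles
        }
      })();
    }
    return inFlight;
  };
}
```

Wrapping the refresh call once, for example `const refreshToken = singleFlight(doRefresh)` with your own `doRefresh`, means three requests that hit an expired token at the same moment trigger one refresh and all receive the same result. It does not cover the logout race, which needs its own check before a refreshed token is stored.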

Payments and idempotency

AI tends to implement “happy path payment flows”:

no strict idempotency key enforcement

retry logic without deduplication guarantees

inconsistent handling of partial failures between client and server

At scale, this produces duplicate charges or lost transactions that nobody notices right away.
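The core of idempotency key enforcement fits in a few lines: the first request for a key does the work, and every retry with the same key gets the stored outcome back. The sketch below keeps that store in memory. `chargeOnce`, `executeCharge`, and `processed` are hypothetical names, and `executeCharge` stands in for a real payment provider call.

```typescript
type ChargeResult = { chargeId: string; amountCents: number };

// Hypothetical server-side store: one outcome per idempotency key.
const processed = new Map<string, Promise<ChargeResult>>();
let chargesExecuted = 0;

// Stand-in for the real payment provider call (an assumption for this sketch).
async function executeCharge(amountCents: number): Promise<ChargeResult> {
  chargesExecuted++;
  return { chargeId: `ch_${chargesExecuted}`, amountCents };
}

function chargeOnce(idempotencyKey: string, amountCents: number): Promise<ChargeResult> {
  const existing = processed.get(idempotencyKey);
  if (existing) return existing; // retry or duplicate: replay the first outcome
  const result = executeCharge(amountCents);
  processed.set(idempotencyKey, result); // stored before awaiting, so concurrent retries dedupe too
  return result;
}
```

A production version would need a durable store shared across instances and a policy for failed attempts, since this sketch also replays rejections. The point is only that deduplication has to be enforced on the server, not assumed from client behavior.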

Caching and state consistency

Simple cache layers become unreliable when:

invalidation rules are implicit or scattered

multiple write paths exist for the same entity

backend evolves but cache keys remain static

The result is not crashes, but silently incorrect data propagation.

Distributed and async systems

AI-generated logic often assumes:

immediate consistency

reliable request ordering

single-source-of-truth execution

These assumptions break under retries, queue delays, and partial failures, leading to behavior that is correct locally but inconsistent globally.
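One way to stop relying on delivery order is to version every update and refuse anything that is not newer than the current state. The sketch assumes the producer attaches a monotonically increasing `version` to each update. `applyUpdate` and the account types are hypothetical.

```typescript
type AccountState = { balance: number; version: number };
type AccountUpdate = { balance: number; version: number };

// Apply an update only if it is newer than what we already hold.
function applyUpdate(state: AccountState, update: AccountUpdate): AccountState {
  if (update.version <= state.version) return state; // duplicate or out-of-order delivery
  return { balance: update.balance, version: update.version };
}

// Usage sketch: updates arrive late and twice.
const deliveries: AccountUpdate[] = [
  { balance: 80, version: 2 },
  { balance: 100, version: 1 }, // older update delivered late
  { balance: 80, version: 2 }, // retry duplicate
];
const finalState = deliveries.reduce(applyUpdate, { balance: 0, version: 0 });
// finalState is { balance: 80, version: 2 }
```

The same check works on the client for server responses that arrive out of order, as long as the server exposes a version or timestamp to compare against.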

Frontend concurrency + backend coupling

Even in UI code, issues emerge when:

optimistic updates assume success ordering

multiple concurrent mutations affect shared state

server responses arrive out of order but are applied directly

The system appears stable, but state drift accumulates over time.

The actual problem is not code quality — it is reduced verification depth

AI does not just generate code. It generates implementations that satisfy surface-level correctness:

types are valid

structure is clean

naming is consistent

patterns look familiar

This creates a strong cognitive bias: the code is assumed to be already validated.

As a result, engineering behavior shifts:

fewer explicit failure simulations

less reasoning about concurrency and edge cases

reduced attention to invalid states

acceptance of “looks correct” as a proxy for correctness

This is not a tooling problem. It is a review process degradation problem.
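An explicit failure simulation can be small. The sketch below uses a hypothetical `deferred` helper to control when each fake request settles, then replays the double-save scenario from Example 1 against a naive handler. `naiveSave` and `simulateOutOfOrder` are illustrative names, not part of any library.

```typescript
// Hypothetical test helper: a promise whose settlement the test controls.
function deferred<T>() {
  let resolve: (value: T) => void = () => {};
  let reject: (reason: Error) => void = () => {};
  const promise = new Promise<T>((res, rej) => {
    resolve = res;
    reject = rej;
  });
  return { promise, resolve, reject };
}

// Naive handler under test: applies whatever response arrives.
let shownName = "";
async function naiveSave(request: Promise<string>): Promise<void> {
  shownName = await request;
}

async function simulateOutOfOrder(): Promise<string> {
  const a = deferred<string>();
  const b = deferred<string>();
  const first = naiveSave(a.promise);
  const second = naiveSave(b.promise);
  b.resolve("B"); // the newer request answers first
  await second;
  a.resolve("A"); // the older request answers last
  await first;
  return shownName; // "A": the stale response won
}
```

Asserting that the result should be "B" turns the race into a failing test, which is the kind of check a "looks correct" review never runs. Calling `reject` instead of `resolve` simulates a partial failure the same way.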

Final takeaway

AI speeds up writing code.

But it also makes bad code feel structurally correct.

And when something looks correct, developers stop interrogating it deeply enough.

That drop in skepticism is more dangerous than actual bugs.